Freddy Lecue | On The Role Of Knowledge Graphs In Explainable Machine Learning

KGC 2021 Conference, Workshops and Tutorials


20m

Machine Learning (ML), as one of the key drivers of Artificial Intelligence, has demonstrated disruptive results in numerous industries. However, one of the most fundamental problems of applying ML models, and particularly Artificial Neural Network models, in critical systems is their inability to provide a rationale for their decisions. For instance, an ML system recognizes an object as a warfare mine by comparing it with similar observations. No human-transposable rationale is given, mainly because common-sense knowledge and reasoning are out of scope for ML systems. We present how knowledge graphs could be applied to expose more human-understandable machine learning decisions, and present an asset that combines ML and knowledge graphs to expose a human-like explanation when recognizing an object of any class in a knowledge graph of 4,233,000 resources.

Freddy Lecue of Thales Canada presents what he and his team have been working on. After trying to apply machine learning in their project, he explains some of the issues that arise when an AI system gathers information and makes decisions based on it. One of them is that the rationale behind a decision is sometimes incorrect.

He also discusses the use of ML in critical systems and raises the question of how users can trust a system that makes decisions without giving a rationale, which he ties to the need for a trustworthy graph. One solution, he says, is knowledge-incorporated techniques, which use underlying knowledge to validate any outcome the system produces.

#knowledgegraphs #knowledgegraphconference #knowledgegraphsmachinelearning

Up Next in KGC 2021 Conference, Workshops and Tutorials

-

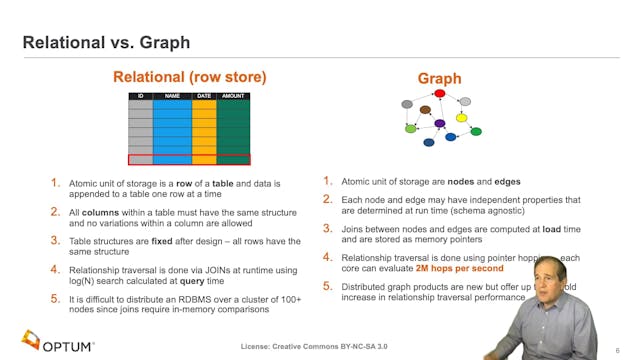

Dan McCreary | Graph Hardware Is Coming!

In this presentation we will show how current general-purpose CPU hardware fails to deliver high performance graph analytics. We show that by doing a detailed analysis of the actual hardware functionally needed by graph queries (pointer jumping), we can redesign hardware that is optimized for fas...

-

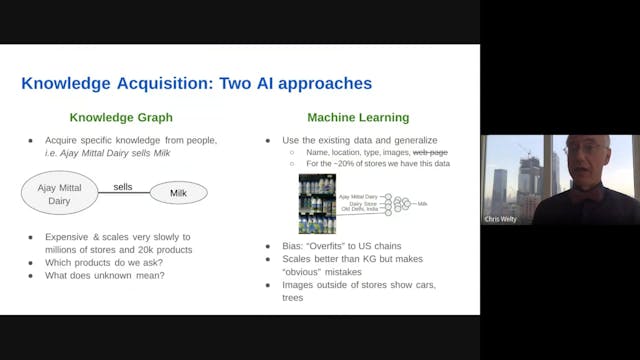

Chris Welty | Shopping Sense: Bringin...

Knowledge Graphs (KGs) continue to penetrate the industrial world after Google's famous "things not strings" was used to explain their acquisition of FreeBase ten years ago. While many KGs exist, they are by and large little more than "entity catalogs", missing entirely the links between those e...

-

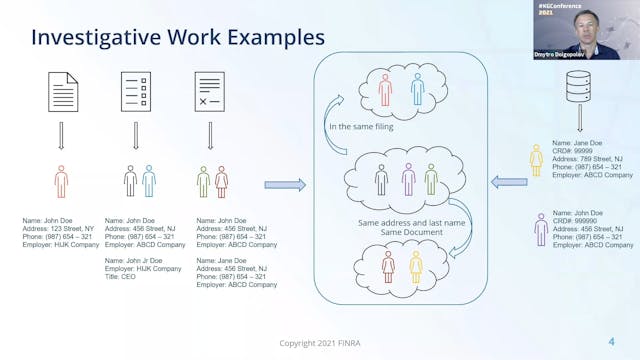

Chen Zhang & Dmytro Dolgopolov | Enti...

During the presentation, we will share our experience in building a knowledge graph leveraging Spark, NLP, and Machine Learning. We will start with explaining the business problems and challenges. Then walk through our data pipeline, including text analytics processes, name similarity solutions, ...